
ElevenLabs voice agent testing guide: what to check before going live

ElevenLabs powers some of the most natural-sounding voice agents available today. Its Flash model reaches ~75ms model inference time on TTS synthesis, among the fastest in its category. Its voice library spans 3,000+ options. Its Conversational AI product handles the full agent stack from STT through to telephony.

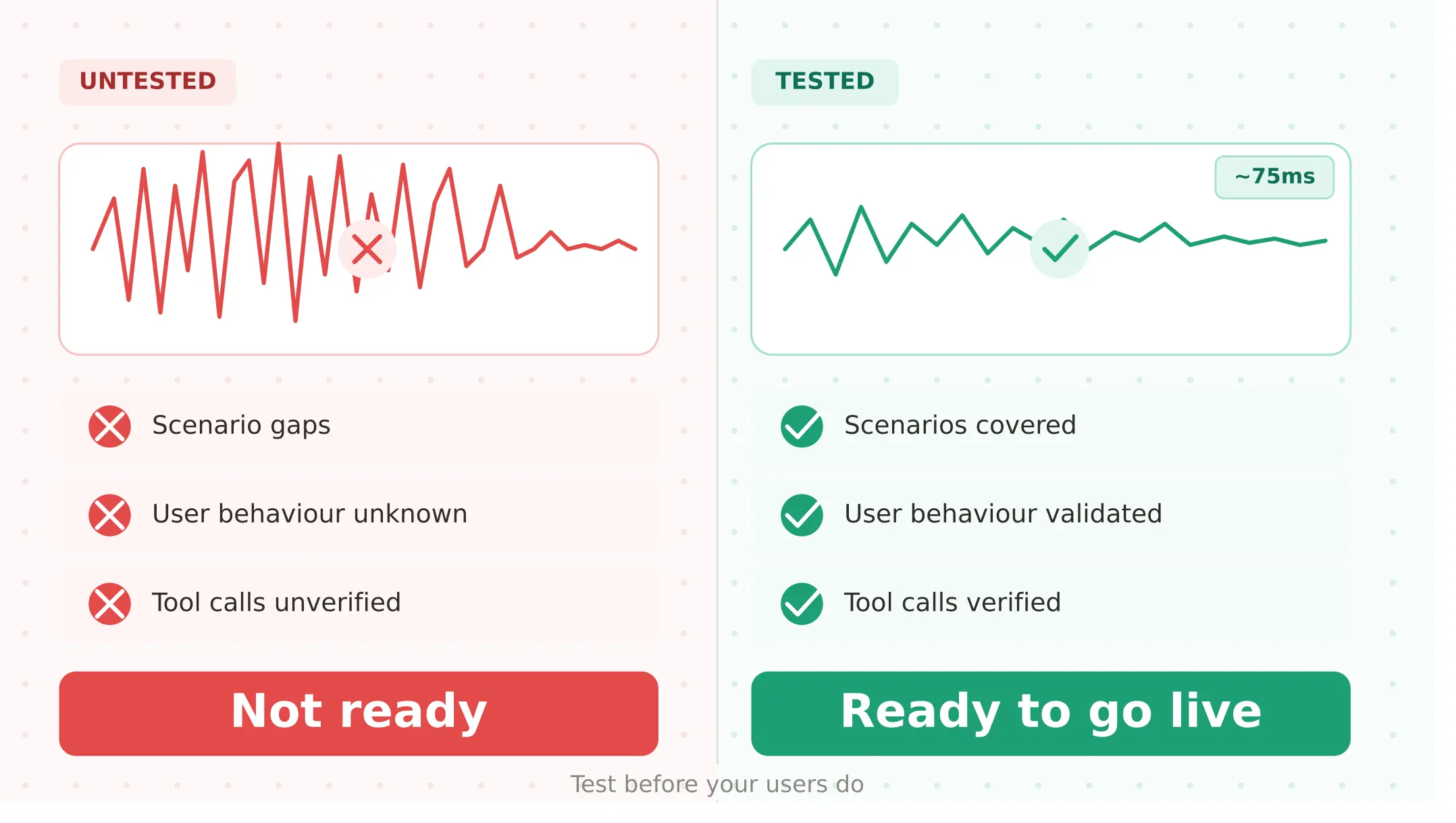

And yet teams consistently hit production problems that no demo prepared them for. A voice agent built on the Conversational AI platform — or using the TTS API inside a Vapi, Retell, or LiveKit stack — has specific failure modes that only emerge at scale, under real acoustic conditions, or when components interact in ways that controlled testing never replicates.

None of these failures show up in demos. All of them show up in production.

This guide covers what to test in your integration before you go live — and what to monitor once you do.

What ElevenLabs actually is in 2026

The company offers two distinct products, and understanding the difference matters for how you test.

ElevenLabs TTS API is a text-to-speech service and one of the most widely used TTS APIs for voice agents. You send text and it returns audio; it is integrated into Vapi, Retell, LiveKit, Pipecat, and dozens of other frameworks. The primary models are Flash v2.5 (~75ms inference, optimised for real-time conversation), Turbo v2.5 (higher quality, higher latency), and Multilingual v2 (best quality, 29 languages). Voice cloning, available through the API on all paid tiers, lets teams clone a brand voice from a five-minute sample.

ElevenLabs Conversational AI is a complete voice agent platform launched in 2024. It includes conversation management, LLM integration (GPT-4o, Claude, and others), STT, TTS, and telephony — all in one product. Teams building on it do not need a separate Vapi or Retell account.

Both products share the same underlying TTS infrastructure and the same production constraints. Whether you are using the TTS API as a component inside another platform or building on the full Conversational AI stack, the testing requirements below apply.

ElevenLabs model and pricing overview

Understanding ElevenLabs pricing matters for production planning because character budgets and concurrent call limits depend on your pricing tier; they are not absolute platform capabilities.

According to the latency documentation, the ~75ms Flash figure refers to model inference time only. Actual end-to-end TTS latency varies with location and endpoint type.

| Model | Inference speed | Quality | Languages | Best for |
|---|---|---|---|---|
| Flash v2.5 | ~75ms | Good | 32 | Real-time voice agents (recommended production default) |
| Turbo v2.5 | ~150ms | Better | 32 | Quality-first deployments |
| Multilingual v2 | ~300ms | Best | 29 | High-quality multilingual deployments |
| Eleven v3 | ~300ms | Expressive | 70+ | Character voices, expressive dialogue |

Pricing tiers (verified April 2026):

| Tier | Monthly cost | Characters included | Best for |
|---|---|---|---|
| Free | $0 | 10,000 | Development and API testing only |
| Starter | $6 | 30,000 | Small-scale testing, commercial rights unlocked |
| Creator | $22 | 100,000 | Professional voice cloning |
| Pro | $99 | 500,000 | Production-scale conversational AI |
| Scale | $299 | 2,000,000 | High-volume product teams |
| Business | $990 | 11,000,000 | Large-scale enterprise deployments |

According to the latency optimisation guide, enterprise customers benefit from increased concurrency limits and priority access to the rendering queue. For non-enterprise tiers, Agents supports burst pricing — temporarily exceeding subscription concurrency limits up to 3× normal capacity, capped at 300 concurrent calls, at double the standard rate.

Verify current limits at elevenlabs.io/pricing before making tier decisions.

Five ways ElevenLabs voice agents fail before they reach users

1. Scenario gaps — does your agent handle every flow it was built for?

The first question before any ElevenLabs voice agent goes live is whether it reliably handles every scenario it was designed for: appointment booking, balance inquiry, cancellation, escalation, and every other flow in scope.

Voice introduces failure modes that text-based scenario testing misses entirely. A scenario that passes via direct API call can fail when the TTS layer mispronounces a key product name, clips the end of a confirmation sentence, or when the STT layer misinterprets a user reply to a synthesised question. The voice layer adds acoustic variability that text testing does not replicate.

Before running your full scenario suite, verify your TTS layer is operational: call the text-to-speech endpoint with your voice ID and API key, request Flash model synthesis with your standard voice settings, and confirm the audio output returns correctly. A 422 response means character budget is exhausted. A 401 means the API key is invalid. Both must be resolved before running scenario evaluation.
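The operational check above can be sketched in Python with only the standard library. This is a minimal illustration, not an official client: the endpoint path, the `xi-api-key` header, and the `eleven_flash_v2_5` model ID reflect the public API as we understand it and should be confirmed against the current API reference. The voice settings are placeholder values, and the status-code meanings simply follow this guide's description.

```python
import json
import urllib.error
import urllib.request

# Assumed base URL for the text-to-speech endpoint; verify in the API reference.
API_BASE = "https://api.elevenlabs.io/v1/text-to-speech"


def build_tts_request(voice_id: str, api_key: str, text: str) -> urllib.request.Request:
    """Build (but do not send) a Flash synthesis request."""
    body = {
        "text": text,
        "model_id": "eleven_flash_v2_5",
        # Placeholder settings: substitute your production values.
        "voice_settings": {"stability": 0.5, "similarity_boost": 0.75},
    }
    return urllib.request.Request(
        url=f"{API_BASE}/{voice_id}",
        data=json.dumps(body).encode("utf-8"),
        headers={"xi-api-key": api_key, "Content-Type": "application/json"},
        method="POST",
    )


def classify_status(status: int) -> str:
    """Map an HTTP status to the outcome described in this guide."""
    if status == 200:
        return "ok"
    if status == 401:
        return "invalid_api_key"
    if status == 422:
        return "character_budget_exhausted"
    return "unexpected"


def smoke_test(voice_id: str, api_key: str) -> str:
    """Send one short request and report the outcome as a string."""
    request = build_tts_request(voice_id, api_key, "Smoke test.")
    try:
        with urllib.request.urlopen(request, timeout=10) as response:
            return "ok" if response.read() else "empty_audio"
    except urllib.error.HTTPError as err:
        return classify_status(err.code)
```

Calling `smoke_test(voice_id, api_key)` with real credentials performs one billable synthesis request, so run it once per environment rather than in a tight loop.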

Evalgent's caller profiles let you define each flow with explicit objectives and success criteria — not just "did the conversation complete" but "did the agent correctly capture the appointment date, confirm the right location, and trigger the booking API call." Run your full scenario suite against the integration before launch. Any scenario that fails in evaluation will fail in production. Any scenario you have not run has an unknown reliability.

2. User behaviour coverage — does it hold up when real users don't follow the script?

Scenario testing tells you the agent works when users behave as expected. User behaviour coverage tells you what happens when they do not — which is most of the time in production.

Real users interrupt mid-sentence. They speak with regional accents. They call from noisy environments. They speak at 1.4× the average rate when in a hurry. They restart sentences and say "actually, wait." For agents using ElevenLabs TTS, barge-in handling is the most common failure point under real user conditions. The platform uses streaming TTS: audio is sent incrementally as it is generated. When a user interrupts mid-sentence, the in-flight stream must be cancelled, playback must stop, and the conversation context must be updated to reflect what the user actually heard. If any step fails, users experience audio bleed or context loss.
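The three barge-in steps (cancel the stream, stop playback, update context) can be illustrated with a small `asyncio` sketch. Everything here is hypothetical scaffolding: `SpeakingTurn` is not part of any SDK, and `asyncio.sleep` stands in for real synthesis and playback. The point is only to show the shape of a test that checks for audio bleed after an interruption.

```python
import asyncio
from typing import List, Optional, Tuple


class SpeakingTurn:
    """Tracks one agent utterance so it can be cancelled mid-stream."""

    def __init__(self, chunks: List[str]) -> None:
        self.chunks = chunks
        self.played: List[str] = []
        self._task: Optional[asyncio.Task] = None

    async def _stream(self, chunk_delay: float) -> None:
        for chunk in self.chunks:
            await asyncio.sleep(chunk_delay)  # stands in for synthesis + playback
            self.played.append(chunk)

    def start(self, chunk_delay: float = 0.01) -> None:
        self._task = asyncio.create_task(self._stream(chunk_delay))

    async def barge_in(self) -> str:
        """Cancel the stream and return the text the user actually heard."""
        if self._task is not None and not self._task.done():
            self._task.cancel()
            try:
                await self._task
            except asyncio.CancelledError:
                pass  # expected: we cancelled the stream ourselves
        return " ".join(self.played)


async def demo() -> Tuple[str, int]:
    turn = SpeakingTurn(["Your", "appointment", "is", "on", "Thursday"])
    turn.start(chunk_delay=0.05)
    await asyncio.sleep(0.12)  # simulated user interruption mid-sentence
    heard = await turn.barge_in()
    await asyncio.sleep(0.1)  # wait to see whether any audio bleeds through
    return heard, len(turn.played)
```

If the number of chunks played after the wait is larger than the number heard at the moment of interruption, audio bled through the cancellation. The returned `heard` string is what should be written back into the conversation context.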

According to Deepgram's TTS comparison research, Flash v2.5 leads on latency at ~75ms, but real-time voice agents require sub-300ms total TTFB for natural conversation flow — and interruption handling adds latency beyond the TTS inference time alone.

Evalgent's caller profiles configure 8 behavioural parameters per synthetic caller: accent, background noise level, speech pace, latency, interruption timing, disfluency rate, speaking volume, and emotional register. This lets you test ElevenLabs Conversational AI against the actual distribution of your users before going live, rather than against a single clean tester. Run barge-in at three interruption points: early (200ms after agent begins), mid-sentence, and rapid successive. Run noise profiles at 45 dB (quiet), 65 dB (open office), and 75 dB (street traffic). Track task completion rate and barge-in recovery rate separately per profile.
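As a rough sketch of how the barge-in and noise combinations above expand into a test matrix, the following Python enumerates the nine profiles and keeps the two metrics separate per profile. The class and field names are illustrative assumptions and do not represent Evalgent's actual configuration format.

```python
from dataclasses import dataclass
from itertools import product
from typing import List

# Interruption points and noise levels taken from the paragraph above.
BARGE_IN_POINTS = ("early_200ms", "mid_sentence", "rapid_successive")
NOISE_LEVELS_DB = (45, 65, 75)


@dataclass(frozen=True)
class CallerProfile:
    barge_in: str
    noise_db: int


@dataclass
class ProfileResult:
    calls: int = 0
    tasks_completed: int = 0
    barge_ins: int = 0
    barge_ins_recovered: int = 0

    @property
    def task_completion_rate(self) -> float:
        return self.tasks_completed / self.calls if self.calls else 0.0

    @property
    def barge_in_recovery_rate(self) -> float:
        return self.barge_ins_recovered / self.barge_ins if self.barge_ins else 0.0


def build_matrix() -> List[CallerProfile]:
    """Every barge-in point crossed with every noise level."""
    return [CallerProfile(b, n) for b, n in product(BARGE_IN_POINTS, NOISE_LEVELS_DB)]
```

Keeping one `ProfileResult` per `CallerProfile` makes it obvious when a scenario passes on clean audio but degrades at 75 dB, which an aggregate pass rate would hide.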

3. Tool calls and handovers — does the agent actually do what it promises?

This is the most commercially important failure mode to test, and the one most teams discover through user complaints rather than pre-launch evaluation.

When a Conversational AI agent says "I've booked your appointment for Thursday at 2pm" — did the booking API receive and confirm that request? When it says "Let me transfer you to our billing team" — did the transfer execute? The transcript shows a successful conversation. The task may not have completed.

This is the core problem that LLM-as-judge evaluation misses: text scoring evaluates what was said, not what was done.

For Conversational AI agents, tool calls are configured as webhooks or function definitions. The failure modes include: webhook returning a non-200 status with no agent recovery, tool call timeout with the agent proceeding as if the task completed, incorrect parameter extraction when TTS mispronounced a key field and STT misheard it, and missing handover triggers when escalation conditions are met but the handover logic does not fire. When your character budget is exhausted mid-call, the TTS layer returns a 422 error — and if that error is unhandled, the agent produces silence instead of a fallback message. Test this scenario deliberately: simulate budget exhaustion and verify the agent recovers gracefully rather than going silent.
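One way to avoid silence on a failed synthesis is to wrap the TTS call and substitute pre-rendered fallback audio. The sketch below assumes a hypothetical `synthesize` callable that raises `TTSError` on a non-success response; neither name comes from a real SDK, and the 422 status follows this guide's description of budget exhaustion.

```python
from typing import Callable, Tuple


class TTSError(Exception):
    """Hypothetical error raised by a TTS wrapper on a non-success response."""

    def __init__(self, status: int) -> None:
        super().__init__(f"TTS request failed with status {status}")
        self.status = status


def speak_with_fallback(
    synthesize: Callable[[str], bytes],
    text: str,
    fallback_audio: bytes,
) -> Tuple[bytes, bool]:
    """Return (audio, used_fallback); never return empty audio."""
    try:
        audio = synthesize(text)
    except TTSError:
        return fallback_audio, True
    if not audio:
        # An empty body would play as silence, so treat it as a failure too.
        return fallback_audio, True
    return audio, False


def exhausted_budget(_text: str) -> bytes:
    """Test double that simulates budget exhaustion as described in this guide."""
    raise TTSError(422)
```

The fallback clip has to be rendered and stored ahead of time, because a message synthesised on demand would hit the same exhausted budget.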

Evalgent verifies tool call outcomes against expected downstream system state — not just whether the agent said the right words. For each scenario, define the expected tool call, expected parameters, and expected downstream state. Evalgent flags divergence between what the agent said and what actually happened, turning tool call verification from a manual spot-check into a systematic pre-launch gate.
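To make the idea of "said versus done" concrete, here is a minimal Python sketch that compares an expected tool call and downstream state with what was actually observed. The data shapes (`tool`, `params`, a flat state dictionary) are assumptions chosen for illustration and are not Evalgent's or ElevenLabs' schema.

```python
from dataclasses import dataclass, field
from typing import Any, Dict, List


@dataclass
class ToolCallExpectation:
    tool: str
    params: Dict[str, Any]
    downstream_state: Dict[str, Any] = field(default_factory=dict)


def find_divergences(
    expected: ToolCallExpectation,
    actual_calls: List[Dict[str, Any]],
    actual_state: Dict[str, Any],
) -> List[str]:
    """List every mismatch between the expectation and what was observed."""
    problems: List[str] = []
    matching = [c for c in actual_calls if c.get("tool") == expected.tool]
    if not matching:
        problems.append(f"tool '{expected.tool}' was never called")
    else:
        sent = matching[-1].get("params", {})  # judge the most recent call
        for key, want in expected.params.items():
            if sent.get(key) != want:
                problems.append(f"param '{key}': expected {want!r}, got {sent.get(key)!r}")
    for key, want in expected.downstream_state.items():
        if actual_state.get(key) != want:
            problems.append(f"state '{key}': expected {want!r}, got {actual_state.get(key)!r}")
    return problems
```

An empty list means the scenario passes. A transcript that reads "I've booked your appointment" alongside a non-empty list is exactly the failure that text-only scoring misses.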

4. Concurrent call capacity — does it survive peak traffic?

Concurrent call limits are a pricing-tier constraint, not an absolute platform capability. The failure pattern is non-obvious in testing: your agent performs correctly with one or two simultaneous calls. The limit only manifests at production volumes, such as peak hours, campaign launches, or multiple agent instances sharing the same ElevenLabs API key.

For teams running outbound campaigns or high-inbound contact centre deployments, the concurrent call ceiling becomes a hard limit at the worst possible moment. Agents supports burst pricing up to 3× base concurrency, capped at 300 concurrent calls, at double the standard rate. Relying on burst as a normal operating mode doubles your TTS cost on those calls.
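The burst arithmetic can be worked through in a few lines of Python. The 3× multiplier, the 300-call cap, and the 2× rate come from the paragraph above; how the cap interacts with a base tier that already exceeds 300 is an assumption here (the sketch never returns less than the base limit), and the function names are illustrative.

```python
BURST_MULTIPLIER = 3          # up to 3x base concurrency
BURST_CAP = 300               # burst capped at 300 concurrent calls
BURST_RATE_MULTIPLIER = 2.0   # burst calls billed at double the standard rate


def burst_ceiling(base_concurrency: int) -> int:
    """Maximum concurrent calls reachable with burst enabled."""
    if base_concurrency <= 0:
        raise ValueError("base_concurrency must be positive")
    # Assumption: the cap never reduces capacity below the base tier limit.
    return max(base_concurrency, min(base_concurrency * BURST_MULTIPLIER, BURST_CAP))


def relative_tts_cost(peak_concurrency: int, base_concurrency: int) -> float:
    """Cost at peak relative to serving every call at the standard rate."""
    if peak_concurrency <= 0:
        raise ValueError("peak_concurrency must be positive")
    if peak_concurrency > burst_ceiling(base_concurrency):
        raise ValueError("peak exceeds burst ceiling; calls would be rejected")
    standard_calls = min(peak_concurrency, base_concurrency)
    burst_calls = peak_concurrency - standard_calls
    return (standard_calls + burst_calls * BURST_RATE_MULTIPLIER) / peak_concurrency
```

For example, a base limit of 20 with a peak of 30 means 10 calls run at the burst rate, so that peak costs about 1.33× what it would at the standard rate.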

Synthetic caller load testing runs your agent at 1×, 2×, and 5× expected peak concurrent volume before launch, making your concurrency ceiling visible before users experience failures. For teams upgrading pricing tiers, a pre-upgrade load test confirms whether the new tier's limit is sufficient — at peak traffic, not average.
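A load ladder like the one described above can be sketched with `asyncio`. The `place_call` coroutine is a hypothetical stand-in for whatever starts one synthetic call against your agent and reports success; `make_fake_agent` is a test double with a fixed concurrency limit, included only so the sketch runs without a real deployment.

```python
import asyncio
from typing import Awaitable, Callable, Dict, List, Sequence

PlaceCall = Callable[[int], Awaitable[bool]]


async def run_load_level(place_call: PlaceCall, concurrent_calls: int) -> Dict[str, int]:
    """Start all calls at once and count the ones that did not succeed."""
    results = await asyncio.gather(
        *(place_call(i) for i in range(concurrent_calls)),
        return_exceptions=True,
    )
    failures = sum(1 for r in results if r is not True)
    return {"concurrent": concurrent_calls, "failures": failures}


async def run_ladder(
    place_call: PlaceCall,
    expected_peak: int,
    multipliers: Sequence[int] = (1, 2, 5),
) -> List[Dict[str, int]]:
    """Run each load level in turn so levels do not overlap."""
    return [await run_load_level(place_call, expected_peak * m) for m in multipliers]


def make_fake_agent(limit: int) -> PlaceCall:
    """Fake agent that rejects calls beyond a fixed concurrency limit."""
    active = 0

    async def place_call(_index: int) -> bool:
        nonlocal active
        if active >= limit:
            return False  # simulated rejection at the concurrency ceiling
        active += 1
        try:
            await asyncio.sleep(0.01)  # simulated call duration
            return True
        finally:
            active -= 1

    return place_call
```

Running `asyncio.run(run_ladder(make_fake_agent(10), 4))` shows the pattern to look for: no failures at 1× and 2×, then failures appearing at 5× once the ceiling is crossed.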

Monitor concurrent usage via the usage dashboard. Set alerts when usage exceeds 70% of tier limit to give time to upgrade before failures occur.

5. Voice quality regression — does it still sound right after every change?

This is the failure mode teams discover latest, because it is subtle. The agent still works. It just sounds different — clips words, changes pacing, or loses the naturalness that made users trust it.

Voice quality regression occurs after switching voices, adjusting stability or similarity boost settings, upgrading TTS model versions, or changing the text your LLM generates. None of these changes will break a functional test. All of them can degrade user experience in ways that accumulate into lower trust and higher call abandonment.

Evalgent runs your golden scenario set before and after any voice configuration change, measuring barge-in recovery rate, turn-taking latency, and repeat-utterance rate — a proxy for whether users found the voice difficult to understand. A change that passes functional testing but increases repeat-utterance rate by more than 10% relative to baseline is a voice quality regression worth catching before production. This is voice agent regression testing applied specifically to the TTS layer — a check no other evaluation tool currently performs.
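The 10% threshold above is a relative change, not a percentage-point change, which is easy to get wrong. A minimal sketch of the comparison, with illustrative function names:

```python
def relative_increase(baseline: float, current: float) -> float:
    """Change relative to baseline, e.g. 0.125 means 12.5% higher."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (current - baseline) / baseline


def is_voice_regression(
    baseline_rate: float,
    current_rate: float,
    threshold: float = 0.10,
) -> bool:
    """True when the metric rose by more than the threshold relative to baseline."""
    return relative_increase(baseline_rate, current_rate) > threshold
```

With a baseline repeat-utterance rate of 8%, a post-change rate of 9% is a 12.5% relative increase and is flagged, even though the absolute difference is only one percentage point.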

ElevenLabs vs Cartesia vs Deepgram Aura-2: which TTS for your agent?

If you are selecting a TTS provider rather than evaluating an existing integration, ElevenLabs versus Cartesia is the most common comparison developers face.

| Dimension | ElevenLabs Flash v2.5 | Cartesia Sonic | Deepgram Aura-2 |
|---|---|---|---|
| TTS inference latency | ~75ms | ~40ms | ~90ms |
| Voice quality | Excellent | Very good | Good |
| Voice library | 3,000+ voices | Moderate | 40+ English voices |
| Voice cloning | Yes (5-min sample) | Yes (rapid) | No |
| Languages | 32 (Flash) / 70+ (v3) | 20+ | 7 languages |
| vs OpenAI TTS | Flash faster; OpenAI has 11 presets, no cloning | n/a | n/a |
| Pricing | Higher | Mid-range | ~40% cheaper than ElevenLabs |
| Key production risk | Character budget exhaustion | Less expressive | Limited voice options |
| Best for | Quality-first, customer-facing agents | Price-performance balance | Cost-sensitive high-volume |

ElevenLabs is the right choice when voice naturalness is a primary requirement. Cartesia Sonic is the default TTS API in Retell AI and a strong option for voice agents on a price-performance basis. Deepgram Aura-2 is approximately 40% cheaper at equivalent volume for cost-sensitive deployments. OpenAI TTS offers 11 preset voices with no cloning, which suits single-vendor simplicity but is limiting where a distinct brand voice is required.

Testing ElevenLabs Conversational AI: platform-specific requirements

For teams building directly on ElevenLabs Conversational AI, pre-launch testing has to cover several failure surfaces beyond the TTS layer.

Prompt regression after every edit. Every prompt change is a regression risk — the same regression testing approach that applies to Vapi and Retell applies here. Run your golden scenario suite after every edit.

LLM backend switching. The platform supports GPT-4o, Claude, and other backends. Switching LLMs changes response distribution, confidence patterns, and error modes. Run a full regression suite when changing the underlying LLM.

Telephony integration error handling. Dropped connections, DTMF input detection, and call transfer logic all need explicit testing. Over PSTN, TTS latency compounds with pipeline latency to produce total response times that differ from WebRTC testing by 400ms or more.

Knowledge base accuracy. Test retrieval against your top 20 expected queries before launch. Knowledge base hallucination — confident but incorrect answers — is a production reliability risk that scenario testing catches and live calls surface at high cost.
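A retrieval check over your expected queries can start as simply as the sketch below. The `ask` callable is a hypothetical wrapper that sends a question to your agent and returns its text answer, and substring matching is a deliberately crude stand-in for whatever grading you use in practice.

```python
from typing import Callable, List, Tuple


def kb_accuracy(
    ask: Callable[[str], str],
    cases: List[Tuple[str, str]],
) -> Tuple[float, List[str]]:
    """Return (accuracy, failed_queries) for (query, required_fact) pairs."""
    if not cases:
        raise ValueError("at least one test case is required")
    failed = [
        query
        for query, required_fact in cases
        if required_fact.lower() not in ask(query).lower()
    ]
    return 1 - len(failed) / len(cases), failed
```

Each case pairs a query with a fact that must appear in the answer, for example `("What are your opening hours?", "9am")`. The list of failed queries is the useful output: those are the answers to review by hand for confident but incorrect content.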

Pre-launch testing checklist for ElevenLabs voice agents

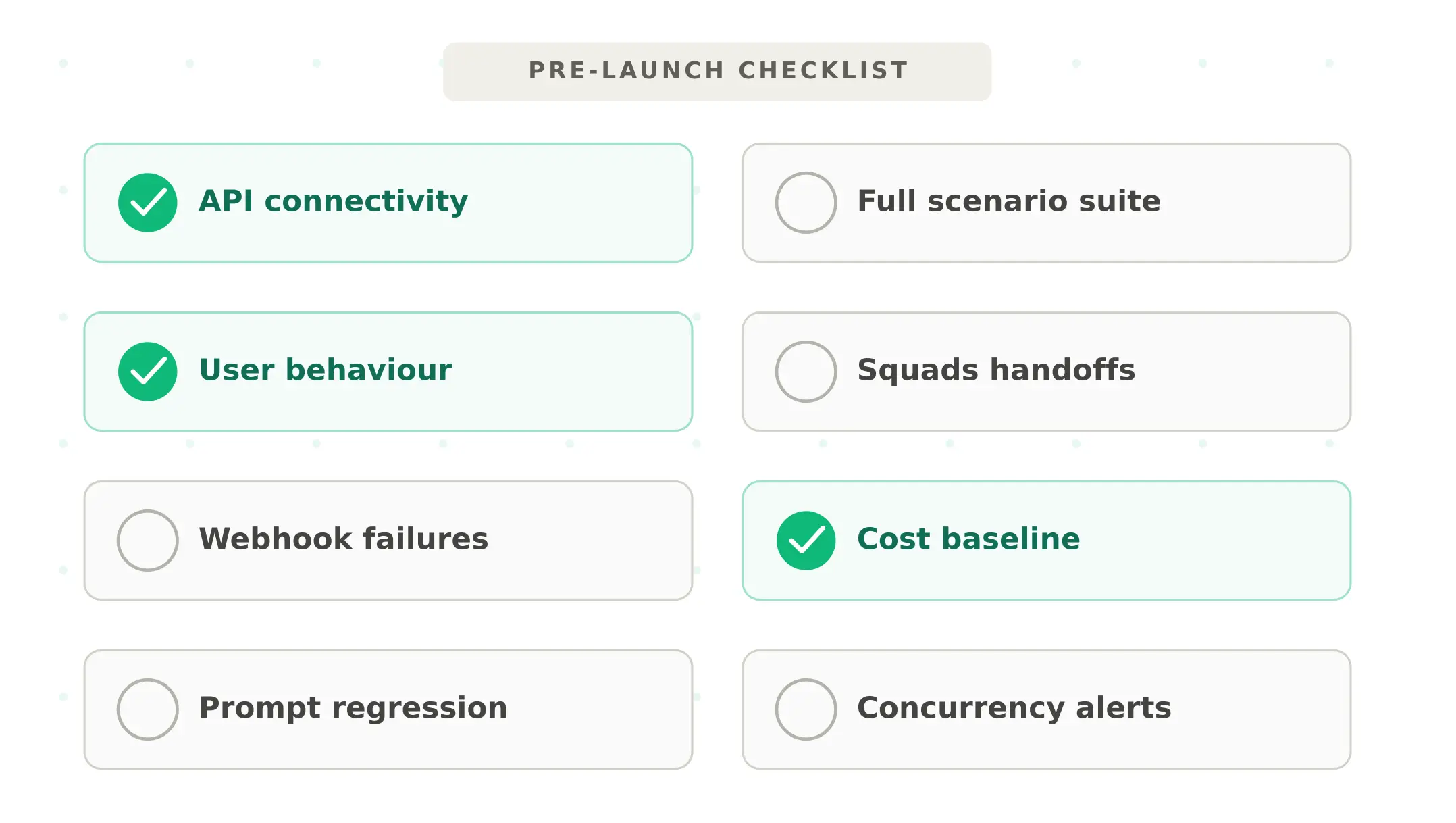

Complete every step before any voice agent built on the platform goes live:

1. Scenario coverage — Run your full scenario library end-to-end. Verify each flow produces the correct tool call, correct confirmation, and correct downstream system state. Flag any scenario with task completion rate below 90%.

2. User behaviour stress test — Run every scenario across at minimum three caller profiles: clean audio baseline, 65 dB noise, and one accent variant representative of your user base. Track barge-in recovery rate and task completion rate per profile. Apply the full stress-testing methodology to find acoustic and conversational breaking points.

3. Tool call and handover verification — For every tool call, verify the webhook receives correct parameters, returns a success response, and the agent confirms the outcome accurately. Test handover logic explicitly: cover the case where the escalation condition is met and the handover fires, and hunt for cases where the condition is met but the handover does not fire.

4. Concurrent load test — Run synthetic callers at 2× expected peak concurrent volume. Verify no requests fail, P95 latency stays within range, and no 422 character budget errors appear at load.

5. Voice quality baseline — Record golden scenario suite results as a baseline. After any voice configuration change, re-run and compare. A more than 10% increase in repeat-utterance rate or barge-in rate warrants investigation before deploying.

6. Character budget projection — Run 10× expected daily call volume and measure character consumption per scenario type. Upgrade before launch if projected consumption exceeds 70% of monthly budget.

7. Error handling verification — Simulate a 422 character budget error. Verify the agent produces a clean user-facing fallback rather than silence. Repeat for STT unavailability, tool call timeout, and dropped connection.

8. P95 latency under PSTN conditions — Measure at P50, P90, and P95 under PSTN, not just WebRTC. If P95 exceeds 2 seconds on Turbo v2.5 or Multilingual v2, evaluate Flash v2.5, which has the lowest inference latency of the three.
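For the latency step in the checklist, the percentiles can be computed from raw per-turn measurements with the nearest-rank method, sketched below. The function names are illustrative, and other percentile definitions (for example, linear interpolation) will give slightly different values on small samples.

```python
import math
from typing import Dict, List


def percentile(samples: List[float], pct: float) -> float:
    """Nearest-rank percentile: the smallest sample with at least pct% at or below it."""
    if not samples:
        raise ValueError("samples must not be empty")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[rank - 1]


def latency_report(samples_ms: List[float]) -> Dict[str, float]:
    """P50, P90, and P95 response times in milliseconds."""
    return {
        "p50": percentile(samples_ms, 50),
        "p90": percentile(samples_ms, 90),
        "p95": percentile(samples_ms, 95),
    }


def exceeds_p95_budget(samples_ms: List[float], budget_ms: float = 2000) -> bool:
    """True when P95 is above the 2-second threshold used in the checklist."""
    return percentile(samples_ms, 95) > budget_ms
```

Collect the samples separately for PSTN and WebRTC runs and report each set on its own; pooling them hides the PSTN tail.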

Monitoring ElevenLabs in production

Understanding the health of an ElevenLabs deployment in production requires monitoring four signals, not just average latency or error rate.

Character consumption rate. Track daily consumption against monthly budget. Alert at 70% utilisation. The usage dashboard provides this data in real time.
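A sketch of the consumption check, assuming you can read characters used so far from your own usage tracking (no specific dashboard or API is implied). The 70% threshold comes from this guide; the linear projection is a simplifying assumption that ignores weekly or campaign-driven spikes.

```python
def utilisation(chars_used: int, monthly_budget: int) -> float:
    """Fraction of the monthly character budget consumed so far."""
    if monthly_budget <= 0:
        raise ValueError("monthly_budget must be positive")
    return chars_used / monthly_budget


def should_alert(chars_used: int, monthly_budget: int, threshold: float = 0.70) -> bool:
    """True once consumption reaches the alert threshold."""
    return utilisation(chars_used, monthly_budget) >= threshold


def projected_utilisation(
    chars_used: int,
    day_of_cycle: int,
    days_in_cycle: int,
    monthly_budget: int,
) -> float:
    """Linear projection of end-of-cycle utilisation from the average daily rate."""
    if not 0 < day_of_cycle <= days_in_cycle:
        raise ValueError("day_of_cycle must be between 1 and days_in_cycle")
    daily_rate = chars_used / day_of_cycle
    return utilisation(round(daily_rate * days_in_cycle), monthly_budget)
```

For example, 200,000 characters used by day 10 of a 30-day cycle on a 500,000-character plan projects to 120% of budget, so the alert should fire well before the 70% mark is actually reached.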

TTS response time at P95. A P95 spike without a change in average latency often signals concurrent limit pressure before explicit errors appear. Monitor by time of day and call type. Tracking Flash latency at P95 rather than on average reveals the tail experiences that damage user trust most.

422 error rate. A single 422 is a warning. An elevated 422 rate is an emergency. Build an alert that fires on any 422 occurrence from your ElevenLabs API key. If multiple agents share a key, a single budget exhaustion event affects all of them simultaneously.

Voice quality signals. A post-deployment increase of more than 10% in barge-in rate or repeat-utterance rate relative to pre-launch baseline is a regression signal. Use production monitoring to correlate TTS-layer signals with end-to-end task completion outcomes.

Summary

ElevenLabs Flash delivers ~75ms inference time and 3,000+ voices — the strongest combination of voice quality and speed available for production voice agents in 2026. Realising those advantages in production requires systematically testing five things before launch: scenario coverage, user behaviour under real acoustic conditions, tool call and handover correctness, concurrent call capacity, and voice quality regression after every change. Every failure mode covered here is catchable before it reaches users.

Frequently asked questions

What is ElevenLabs Flash and how fast is it?

Flash is the platform's low-latency TTS model for real-time voice agents. According to the documentation, it delivers ~75ms inference time for short inputs under normal conditions. For comparison, Cartesia Sonic achieves ~40ms TTFB, Deepgram Aura-2 ~90ms, and OpenAI TTS averages ~250ms. These figures mix inference time and TTFB, so treat them as indicative rather than directly comparable. The 75ms refers to inference time only; end-to-end latency varies with pipeline architecture, location, and input length.

What is ElevenLabs character budget exhaustion?

The ElevenLabs character limit is reached when a voice agent consumes all characters in its pricing tier. The API then returns a 422 error on every TTS request until the budget resets or the plan is upgraded. If unhandled, the agent fails silently. Character budget exhaustion is the most common post-launch surprise in production: monitor daily consumption and alert at 70%.

How do I test ElevenLabs Conversational AI before going live?

Run five checks: verify scenario coverage end-to-end, test user behaviour across accent and noise profiles, verify every tool call and handover fires correctly with correct parameters, run concurrent load at 2× peak volume, and establish a voice quality baseline to detect regressions after configuration changes. Use Evalgent's scenario library and caller profiles to run all five systematically.

How does ElevenLabs handle barge-in interruptions?

The platform uses streaming TTS: audio is sent incrementally as it is generated. When a user interrupts, the in-flight stream must be cancelled, playback must stop, and context must update together. ElevenLabs Conversational AI handles this internally. When ElevenLabs TTS runs inside Pipecat or LiveKit, the integration framework manages barge-in. Test at early (200ms after agent begins), mid-sentence, and rapid successive interruption points before launch.

How does ElevenLabs compare to Cartesia for voice agents?

Flash achieves ~75ms inference time versus Cartesia Sonic's ~40ms TTFB. ElevenLabs offers 3,000+ voices versus Cartesia's more limited library, with superior voice naturalness. ElevenLabs pricing is higher; Cartesia is the default TTS API in Retell AI and is the better price-performance choice for most mid-volume deployments. Choose ElevenLabs when voice quality is the primary requirement; choose Cartesia when cost efficiency matters more.

What are the ElevenLabs concurrent call limits?

Base concurrency limits depend on your pricing tier. Agents supports burst pricing, which temporarily raises that limit up to 3× normal capacity, capped at 300 concurrent calls, at double the standard rate for burst calls. For production deployments, test at 2× expected peak concurrent volume before launch. Monitor via the usage dashboard and set alerts at 70% of tier limit to allow time for a tier upgrade before failures occur.

What is ElevenLabs Conversational AI?

The platform's Conversational AI product launched in 2024. Unlike the standalone TTS API, it includes conversation management, LLM integration (GPT-4o, Claude, and others), STT, TTS, and telephony in one product. Teams deploy full voice agents without needing a separate Vapi or Retell account. It supports multiple LLM backends and integrates with telephony via Twilio and other providers.

Is ElevenLabs good for production voice agents?

It is a strong choice where voice quality is the priority. Flash at ~75ms provides excellent real-time performance and the 3,000+ voice library covers most needs. The main risks — character budget exhaustion, concurrent limits, barge-in edge cases, and voice quality regression — are all catchable with pre-launch testing. For cost-sensitive deployments, Deepgram Aura-2 at approximately 40% lower cost is worth evaluating.
