Test your voice agent

Why AI voice agents fail in production (and how to prevent it)

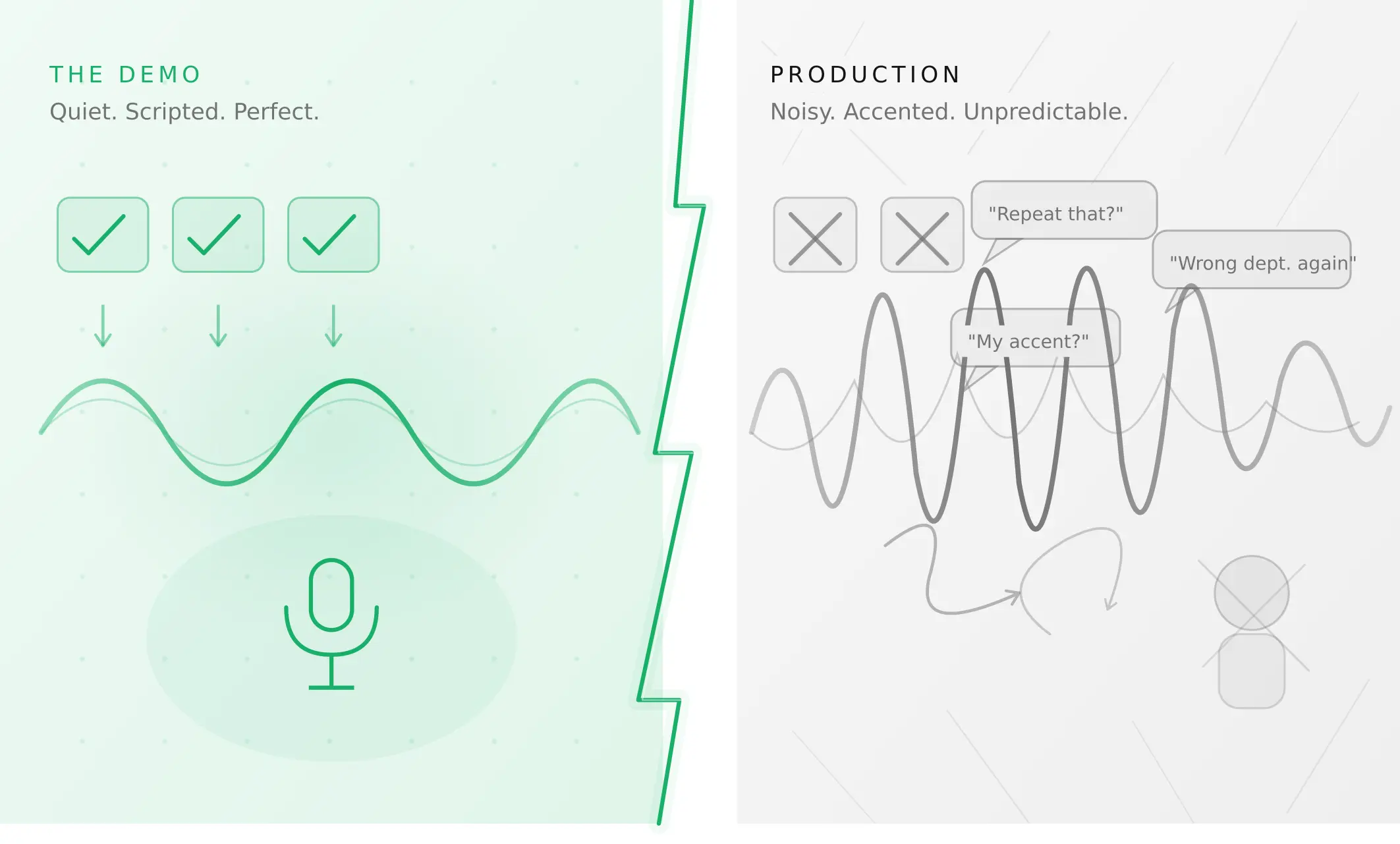

The demo went flawlessly. Your AI voice agent handled every scripted scenario — appointment bookings, balance enquiries, cancellation flows — without a single error. Stakeholders approved the launch.

By the next morning, support tickets arrived.

"It kept asking me to repeat myself."

"It transferred me to the wrong department three times."

"It couldn't understand my accent."

"I said cancel and it tried to upgrade my plan."

This is the voice agent demo vs production gap — the distance between what a controlled environment reveals and what real users actually experience. It is the most consistent pattern in production voice AI, and it is not a mystery. It is a measurement problem.

This article explains why AI voice agents fail in production, identifies the five mechanisms responsible for most AI failures in real-world deployments, and outlines what genuine voice agent production readiness testing looks like before your AI voice agent reaches users.

Why the demo environment lies to you

Demo environments are designed to showcase capability. They are not designed to test resilience. A typical demo runs in quiet conditions with a native speaker, a clean microphone, a linear conversation script, and a patient tester who waits for prompts. Every variable is controlled. Every edge case is avoided.

Production reverses every one of those assumptions.

Real users call from moving vehicles, crowded offices, and factory floors. They speak with regional accents. They interrupt mid-sentence. They ask two questions in a single breath. They correct themselves halfway through a request. They use phrases your model has never encountered. None of this appears in a demo.

The result is that AI voice agents performing at 95% accuracy in controlled environments can drop to 70% accuracy — or lower — once they encounter real-world conditions. AI in the real world behaves differently from AI in a demo, and that difference is not theoretical: every percentage point of ASR accuracy lost to noise compounds downstream through intent classification and task completion.

Recognising this gap is not pessimism. It is the first step toward building AI voice agents that actually work at scale. The teams shipping reliable AI voice agents are not the ones with the most advanced models — they are the ones who have done the work of understanding exactly where and how their agents fail before users discover it for them.

The 5 root causes of voice agent failure in production

1. Acoustic degradation

ASR accuracy is the foundation of every voice agent interaction. When it degrades, every downstream component — intent classification, response generation, task execution — degrades with it.

Most voice AI training data is recorded in clean conditions. Production audio is not. A user calling from a busy street introduces background noise that can push a model trained on studio audio from 94% word accuracy to below 75%. According to Deepgram's published benchmarks, background noise at typical contact-centre levels (55–65 dB SNR) reduces transcription accuracy by 15–30% depending on the model and codec in use (Deepgram, 2025).

The problem compounds through codec choice. Most telephony infrastructure uses narrowband audio at 8 kHz. VoIP calls add compression artefacts, packet loss, and jitter. Bluetooth connections degrade high-frequency speech. An agent tested exclusively over clean WebRTC audio will behave differently when calls arrive over PSTN — and most enterprise deployments involve PSTN.

How to test for this: Voice agent testing before deployment must include calls routed through the actual telephony stack, not just browser-based WebRTC. Inject background noise at three levels — quiet (office ambient at 45 dB), moderate (open office at 65 dB), and high (street traffic at 75 dB) — and measure ASR word error rate (WER) and task completion rate at each level. Establish the threshold at which your agent becomes unreliable, and design fallback behaviour for conditions beyond it.

2. Accent and dialect variation

English has over 160 documented regional accents and dialects. Most voice AI training datasets over-represent a small subset — broadly, standard American and received British pronunciation — and under-represent regional and non-native speaker patterns.

The effect is systematic: users whose speech patterns fall outside the training distribution experience consistently higher error rates. In practice, a 5% WER increase for one demographic can translate to a 20–30% drop in task completion rate for that group. This is not only a performance problem. It is an accessibility and equity problem that regulators in several jurisdictions are beginning to scrutinise.

The challenge goes beyond recognition accuracy. Different accent groups use different idioms, sentence structures, and implicit references. An agent that understands the words a speaker produces may still misclassify intent if it has not encountered the phrasing patterns that speaker naturally uses. Understanding how voice agents fail with accents requires looking beyond average WER to per-demographic task completion rates — a distinction that aggregate metrics routinely obscure.

How to test for this: Map the accent and dialect profiles most common in your user base — this requires looking at your actual traffic, not making assumptions. Test your agent systematically against each profile using synthetic callers parameterised with the relevant speech patterns. Track WER and intent accuracy separately by accent group. Where gaps exist, they represent both a reliability risk and a user equity issue.

3. Conversational unpredictability

Demos follow scripts. Users do not.

Real conversations include mid-sentence corrections ("I want a flight to New York — no, wait, Boston"), implicit references ("Same as last time"), multi-intent utterances ("Check my balance and also tell me when my next payment is due"), emotional escalation when something goes wrong, and repair sequences — the natural human behaviour of backtracking, clarifying, and restarting.

Voice AI models trained on clean intent-response pairs struggle with the messiness of natural dialogue. They fail to track context across turns, miss implicit references, and cannot gracefully handle the kinds of conversational repair that human agents do automatically. The result is not a catastrophic failure — it is a pattern of small degradations that frustrate users enough to escalate or abandon the interaction.

Research on real-world voice agent deployments shows that context degradation typically begins appearing at three to five conversational turns and becomes pronounced at seven to ten turns. An agent that handles a two-turn appointment booking cleanly may fail on the same task when the user changes their mind once or asks a clarifying question in the middle.

How to test for this: Scenario-based evaluation should explicitly include non-linear conversation paths — mid-flow corrections, implicit references, multi-intent utterances, and emotional escalation patterns. These cannot be captured by testing individual utterances. They require full-conversation simulation with synthetic callers that can execute branching dialogue paths. The stress-testing framework covers this in detail.

4. Edge cases at scale

A 99% task completion rate sounds excellent. At 10,000 daily conversations, that is 100 failures per day — 100 frustrated users, up to 100 potential churn events, 100 data points undermining confidence in your AI programme.

Edge cases that appear negligible during testing become statistical certainties at production scale. Unusual names that break entity extraction. Ambiguous date references ("next Friday" means a different date depending on the day of the week). Domain-specific terminology your model has not encountered. Code-switching between languages within a single call. Medical terms, financial jargon, or product names that fall outside your training distribution.

The insidious aspect of this category is that these failures are not visible in aggregate metrics. Your average accuracy remains high. Your task completion rate looks stable. The failures are concentrated in a long tail of rare-but-real inputs — and that tail grows as your user base grows. Voice agent edge cases at scale are not a future problem to address after launch; they are a pre-deployment testing requirement.

How to test for this: Voice agent evaluation before deployment needs to include explicit boundary testing for the edge cases most likely in your domain. Build a test set from actual production transcripts, not synthetic scenario designs. Where production data is not yet available, conduct structured exploration: test with unusual values in the most consequential fields (names, amounts, dates, product identifiers), test with partial information and implicit references, and test with domain-specific terminology your training data may not cover well.

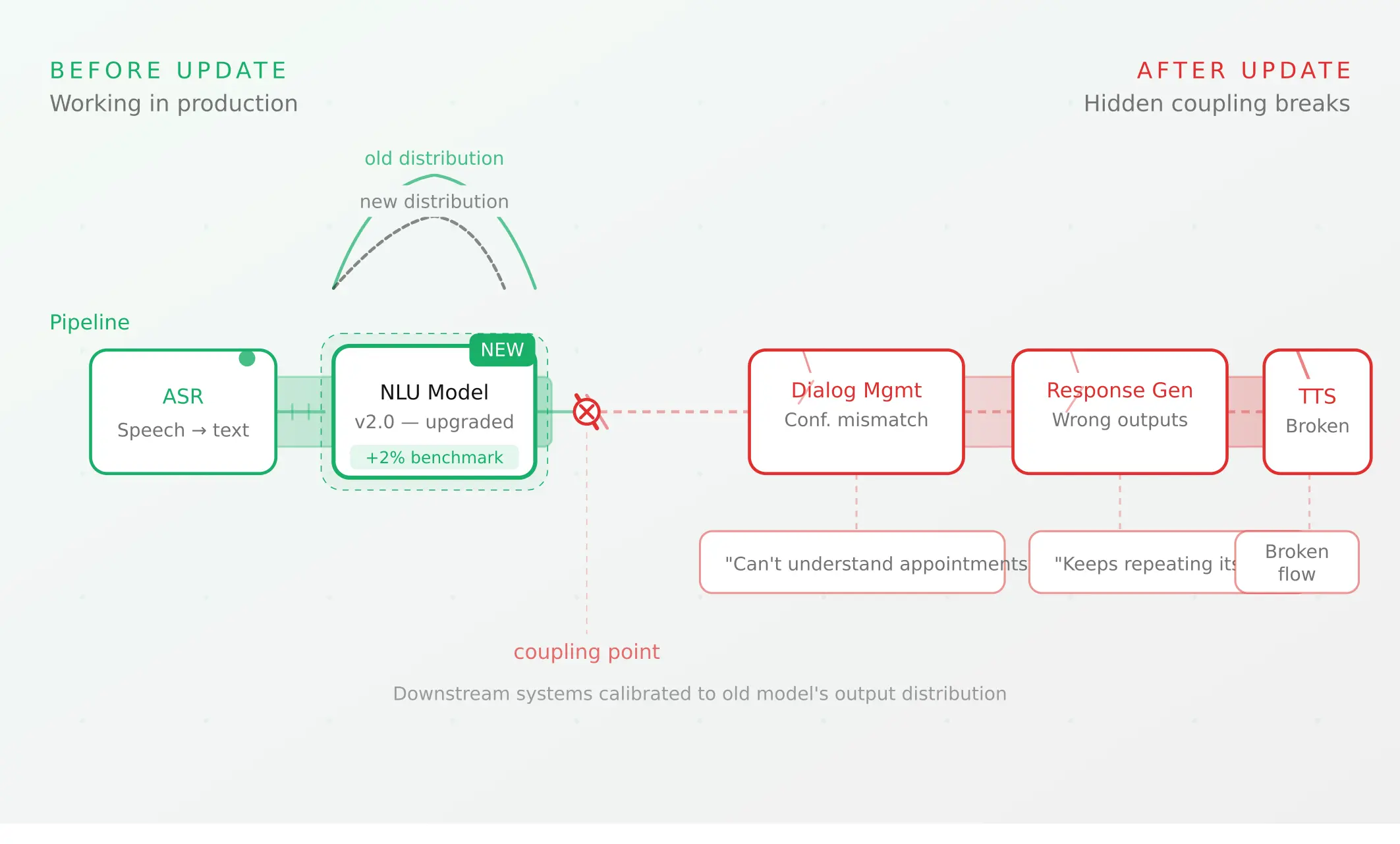

5. Component coupling and production ai behaviour

Voice AI systems are not single models. They are pipelines: ASR converts audio to text, an LLM generates a response, TTS converts the response back to audio, and those components connect to telephony infrastructure, CRM systems, booking APIs, and databases. Each component has its own failure modes. The pipeline introduces failure modes none of the components produce individually.

Understanding AI in production means accepting that AI behavior in real world deployments differs fundamentally from AI behavior in isolated testing environments. AI in real world conditions encounters component interactions, latency variability, and input distributions that unit tests cannot anticipate. An AI voice agent that scores perfectly in evaluation can still fail systemically when its output feeds a downstream component calibrated for a different distribution.

The most common version of this is the coupling problem: downstream components learn to depend on the specific output characteristics of upstream components. When you update one component — switching from Deepgram to AssemblyAI for ASR, or from GPT-4o to Claude for your LLM — the output distribution changes. Confidence scores shift. Response lengths change. Latency profiles differ. Downstream components that were calibrated to the previous distribution begin to fail in ways that neither the old component nor the new component would fail in isolation.

This is why an LLM update that benchmarks higher on accuracy can still crater production metrics — a pattern described in detail in The Regression Problem. The new model is not worse. The pipeline is misaligned.

How to test for this: Component-level testing is necessary but not sufficient. Every component update — not just full model changes, but prompt changes, STT model version bumps, and TTS provider switches — requires end-to-end regression testing on real conversation scenarios. Monitor output distributions at each component boundary over time, not just final task completion rates.

The real cost of the demo-to-production gap

It is worth quantifying what the gap actually costs for AI voice agents in production.

A voice agent handling 10,000 daily calls with a 95% task completion rate generates 500 failed interactions per day. At a typical contact centre average handling time of 8 minutes per escalation, that is 67 hours of human agent time consumed daily by AI failures — roughly equivalent to a full-time support team of eight people. That is before accounting for customer churn, repeat calls, and brand damage.

The behaviour of AI voice agents in the real world is fundamentally different from their behaviour in demos — and closing that gap costs a fraction of what the gap itself costs in production. The teams that treat voice agent production readiness as a pre-deployment gate — not a post-launch firefighting exercise — recover that cost before incurring it.

What voice agent production readiness actually means

Production readiness is not a binary state. It is a property that holds across a defined range of conditions. An AI voice agent is production-ready for a specific deployment context when its performance remains above acceptable thresholds across the realistic range of inputs, acoustic conditions, and conversation patterns it will encounter.

This means production readiness for AI voice agents cannot be determined from demo performance alone. It requires:

1. Define the production distribution. What accents will your users have? What acoustic environments will they call from? What phrasing patterns are common in your domain? What edge cases are statistically likely at your target call volume?

2. Test against that distribution before deployment. Use scenario-based evaluation with synthetic callers that can replicate the realistic range of inputs. Run a minimum of 500 evaluation conversations across the relevant parameter space before any production launch.

3. Establish explicit pass/fail thresholds. Define what acceptable performance means: WER below 12%, task completion above 85%, P95 latency below 800ms (per ITU-T G.114), escalation rate below 15%. Do not deploy until every threshold is met across your target conditions.

4. Build regression infrastructure. Every subsequent change — model updates, prompt edits, STT provider changes, TTS updates — requires re-running your evaluation suite against those thresholds. Use automated evaluation to make this continuous rather than manual.

5. Monitor production against pre-deployment baselines. Your evaluation results become the baseline. If production metrics diverge from them, you have detected a distribution shift and can investigate before it reaches critical failure levels.

The gap between what you measure and what breaks

The most important lesson from hundreds of production voice AI deployments is this: the metrics you measure during testing are almost never the metrics that determine production success.

Teams measure ASR accuracy on clean audio. Production failures come from noisy audio.

Teams measure intent classification on known phrases. Production failures come from unfamiliar phrasing.

Teams measure average task completion. Production failures are concentrated in the long tail.

Teams measure latency in isolation. Production failures come from latency compounding under load.

Transcript analysis using LLM-as-Judge makes this worse by measuring a shadow of the actual conversation — the text representation stripped of timing, acoustic quality, and ASR errors. The score looks good because the transcript is evaluated in ideal conditions, not in the conditions your users experience.

The solution is not to measure more of the same things. It is to measure differently: against realistic acoustic conditions, with real accent diversity, across the full conversational parameter space, and with explicit verification that tasks were actually completed — not just that conversations sounded like they completed.

From demo to production: a five-step evaluation checklist

This framework applies to both initial deployment and every subsequent update:

1. Profile your production distribution. Document the realistic range of accents, acoustic environments, conversation patterns, and edge cases your agent will encounter. Update this profile as your user base grows.

2. Build a golden evaluation set. Create a library of at least 100 scenario conversations with defined expected outcomes — covering happy paths, non-linear paths, edge cases, and failure modes. This set is your ongoing regression baseline.

3. Run evaluation across the full parameter space. For every deployment, test the golden set across your acoustic profiles, accent profiles, and conversational complexity levels. Use automated synthetic callers to make this feasible at the required scale.

4. Gate deployment on thresholds. Define the minimum acceptable performance on each metric — WER, task completion, latency P95, escalation rate — and do not deploy until every threshold is met across every test condition.

5. Convert every production failure into a test case. When a real user has a bad experience, add that call to your regression set. Over time, your evaluation suite evolves from imagined scenarios into a library built from actual production failures, making your testing increasingly grounded in reality.

Voice agent failure patterns by industry

Production failure patterns vary significantly by deployment context. Understanding the domain-specific failure modes your agent will face helps prioritise what to test.

| Industry | Most common failure modes | Key evaluation focus |

|---|---|---|

| Healthcare | Medical terminology WER, patient speech (elderly, distressed), HIPAA compliance edge cases | Terminology accuracy, slow speech patterns, escalation triggers |

| Financial services | Numbers and amounts (high ASR error rate), regulatory disclosure completeness, fraud-edge utterances | Numeric accuracy, disclosure verification, adversarial inputs |

| Retail / e-commerce | Product name extraction, multi-item orders, order correction flows | Entity extraction, correction handling, order state tracking |

| Contact centre (general) | High call volume noise, accent diversity, emotional escalation, hold/transfer patterns | Acoustic robustness, escalation logic, transfer handling |

| India / multilingual | Code-switching (Hinglish, regional languages), local accent diversity, informal register | Multi-language handling, regional ASR accuracy, code-mix intent classification |

Summary

AI voice agents fail in production because controlled testing environments do not replicate the conditions real users create. The five mechanisms responsible are acoustic degradation, accent variation, conversational unpredictability, long-tail edge cases, and component coupling failures. None of these can be identified through demo testing or transcript analysis alone.

Voice agent production readiness is a measurable property, not a feeling of confidence. It requires scenario-based evaluation against a realistic production distribution, explicit pass/fail thresholds for every key metric, and regression infrastructure that treats every update as a potential production risk. The teams that close the demo-to-production gap are the ones that measure what breaks in AI voice agents — not what looks good in a demo.

Frequently asked questions

Why do AI voice agents fail in production even when demos succeed?

Demos use clean audio, cooperative speakers, and scripted conversations. Production introduces accent diversity, background noise, interruptions, and edge cases that demos never replicate. Each factor degrades ASR accuracy, which compounds through intent classification and task completion downstream. The gap between demo performance and production performance is a measurement problem — not a technology limitation.

What is voice agent production readiness?

Voice agent production readiness is the property of performing above defined thresholds across the realistic range of inputs, acoustic conditions, and conversation patterns a deployment will encounter. It requires pre-deployment evaluation against a representative test distribution, explicit pass/fail criteria, and regression testing on every subsequent change. An agent is production-ready when it meets those criteria — not when a demo looks good.

How do voice agents fail with accents?

ASR models trained on limited accent diversity produce higher word error rates for under-represented accents. A 5% WER increase for a specific accent group can produce a 20–30% drop in task completion rate for those users. Testing requires synthetic callers parameterised with the accent profiles present in your user base, with WER and intent accuracy tracked separately per profile.

What is the demo-to-production gap in voice AI?

The demo-to-production gap is the difference between agent performance in controlled conditions (clean audio, scripted conversations, cooperative testers) and performance with real users (noisy environments, accent diversity, non-linear conversations, edge cases at scale). Quantitatively, agents routinely drop 15–25 percentage points in task completion rate between demo and production if no production-aligned evaluation is conducted before launch.

How many test scenarios do I need before deploying a voice agent?

A minimum of 500 evaluation conversations is a practical baseline for initial deployment, covering at least three acoustic profiles, the primary accent groups in your user base, linear and non-linear conversation paths, and the most consequential edge cases for your domain. This number should grow over time as production failures are converted into regression test cases.

What metrics should I track for voice agent production quality?

Track word error rate (WER) per acoustic condition and accent profile, task completion rate, first-call resolution rate, P95 response latency, escalation rate, and error rate. Set explicit thresholds — typical targets are WER below 12%, task completion above 85%, P95 latency below 800ms, escalation below 15% — and alert when any metric deviates more than 5% from your pre-deployment baseline.

How does background noise affect AI voice agent performance?

Background noise degrades ASR accuracy by reducing the signal-to-noise ratio available to the speech recognition model. At contact-centre ambient levels (55–65 dB), transcription accuracy typically drops 15–30% compared to quiet conditions, depending on the ASR provider and codec in use (Deepgram, 2025). This reduction cascades: every intent that is misclassified due to a WER increase becomes a failed task.

What is voice agent regression testing and when is it needed?

Voice agent regression testing re-runs a defined evaluation suite after any system change — model update, prompt edit, STT provider switch, or integration change — to verify that previously working scenarios still pass. It is required on every deployment, not just major releases. A single prompt word change can silently break conversation flows that worked before.

Related Articles

Voice agent regression testing: why LLM updates break production

Updating your LLM improves benchmarks but breaks production voice agents in 5 predictable ways. How to test after every model update and prevent regressions.

Read more

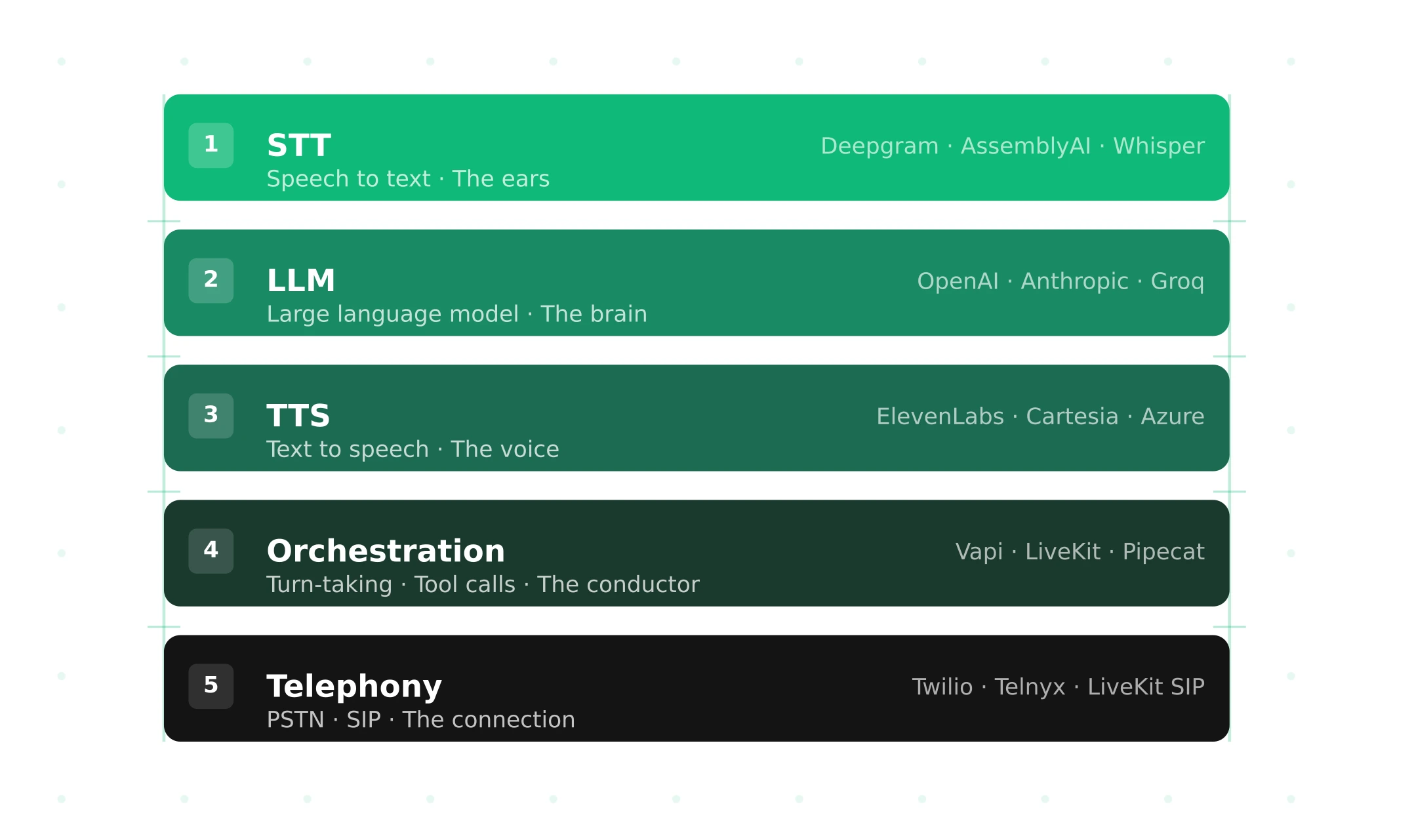

Voice agent stack: the complete guide

Voice agent stack guide: STT, LLM, TTS, orchestration, and telephony. Latency budget, cost per layer, build vs buy, and what to test before production.

Read more